In our previous blog post, we explored containerizing a Large Language Model (LLM) from Hugging Face using FastAPI and Docker. The next step is deploying this containerized application on a Kubernetes cluster. Along the way, I'll share the observations and insights I gathered during this exercise.

The deployment YAML spec and detailed instructions are available in my GitHub repo:

https://github.com/vineethac/huggingface/tree/main/6-deploy-on-k8s

Requirements

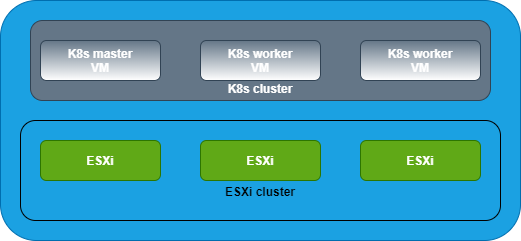

- I am using a Tanzu Kubernetes Cluster (TKC).

- Each node uses the best-effort-2xlarge VM class, which provides 8 vCPUs and 64Gi of memory.

```
❯ KUBECONFIG=gckubeconfig k get node
NAME                                             STATUS   ROLES                  AGE    VERSION
tkc01-control-plane-49jx4                        Ready    control-plane,master   97d    v1.23.8+vmware.3
tkc01-control-plane-m8wmt                        Ready    control-plane,master   105d   v1.23.8+vmware.3
tkc01-control-plane-z6gxx                        Ready    control-plane,master   97d    v1.23.8+vmware.3
tkc01-worker-nodepool-a1-pqq7j-dc6957d97-8gjn8   Ready    <none>                 21d    v1.23.8+vmware.3
tkc01-worker-nodepool-a1-pqq7j-dc6957d97-c9nfq   Ready    <none>                 21d    v1.23.8+vmware.3
tkc01-worker-nodepool-a1-pqq7j-dc6957d97-cngff   Ready    <none>                 21d    v1.23.8+vmware.3
```

- I've attached 256Gi storage volumes to the worker nodes, mounted at /var/lib/containerd. The worker nodes that run these LLM pods need enough storage space. Otherwise the pods may get stuck, keep restarting, or end up in an unknown status. If a worker node runs out of disk space, pods are evicted with the warning `The node was low on resource: ephemeral-storage.` The TKC spec is available in the GitHub repo mentioned above.
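Container images and container writable layers are stored under `/var/lib/containerd`. Before the LLM pods are scheduled, it can help to confirm that a worker node still has headroom there. The minimal Python sketch below is meant to be run on a worker node. It reports free space with `shutil.disk_usage`. The 50 GiB threshold and the helper names are assumptions for illustration, not values from the repo.

```python
import shutil

GIB = 1024 ** 3
# Hypothetical threshold for illustration only; size it to your image and model.
MIN_FREE_GIB = 50.0


def free_gib(path: str) -> float:
    """Free space, in GiB, on the filesystem that holds `path`."""
    return shutil.disk_usage(path).free / GIB


def has_enough_space(path: str = "/var/lib/containerd",
                     min_free_gib: float = MIN_FREE_GIB) -> bool:
    """True if the filesystem holding `path` has at least `min_free_gib` GiB free."""
    return free_gib(path) >= min_free_gib


if __name__ == "__main__":
    target = "/var/lib/containerd"
    try:
        print(f"{target}: {free_gib(target):.1f} GiB free, "
              f"enough: {has_enough_space(target)}")
    except FileNotFoundError:
        # Not a containerd node (or a different data root is configured).
        print(f"{target} does not exist on this host")
```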

Deployment

- The Deployment and Service YAML specs are defined in `fastapi-llm-app-deploy-cpu.yaml`.

- This spec targets a CPU-powered Kubernetes cluster. Running it on a GPU-powered cluster may require additional configuration.

- The readiness and liveness checks are already implemented in the LLM app itself.

- The readiness probe calls the `/healthz` endpoint exposed by the FastAPI app. This ensures that the FastAPI app itself is healthy and responding to API calls.

- The liveness probe runs the `liveness.py` script within the app. The script calls the `/ask` endpoint, which sends a query to the LLM and returns its response. This ensures that the LLM is responding to user queries. If the LLM stops responding or hangs for any reason, the liveness probe fails and Kubernetes eventually restarts the container.

- You can apply the deployment YAML spec as follows:

```
❯ KUBECONFIG=gckubeconfig k apply -f fastapi-llm-app-deploy-cpu.yaml
```

Validation

```
❯ KUBECONFIG=gckubeconfig k get deploy fastapi-llm-app
NAME              READY   UP-TO-DATE   AVAILABLE   AGE
fastapi-llm-app   2/2     2            2           21d

❯ KUBECONFIG=gckubeconfig k get pods | grep fastapi-llm-app
fastapi-llm-app-758c7c58f7-79gmq   1/1     Running   1 (71m ago)   13d
fastapi-llm-app-758c7c58f7-gqdc6   1/1     Running   1 (99m ago)   13d

❯ KUBECONFIG=gckubeconfig k get svc fastapi-llm-app
NAME              TYPE           CLUSTER-IP      EXTERNAL-IP     PORT(S)          AGE
fastapi-llm-app   LoadBalancer   10.110.228.33   10.216.24.104   5000:30590/TCP   5h24m
```
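Both pods report `1/1` ready, which means their readiness probes are passing. The sketch below makes the two probe checks described earlier more concrete. It is a minimal Python version of equivalent logic that uses only the standard library. It is not the `liveness.py` from the repo. The following are assumptions:

- the in-container address `http://localhost:5000`;
- the short test prompt;
- the timeouts;
- the helper names `is_ready`, `is_live` and `main`.

The `{"text": ...}` request body mirrors the curl example below.

```python
import http.client
import json
import urllib.request

# Assumptions (not taken from the repo): the app listens on port 5000 inside
# the container, and /ask accepts a JSON body of the form {"text": "..."}.
BASE_URL = "http://localhost:5000"
TEST_PROMPT = "Say hello"  # hypothetical short prompt to keep the check cheap


def is_ready(base_url: str = BASE_URL, timeout: float = 5.0) -> bool:
    """Readiness-style check: GET /healthz answers with HTTP 200."""
    try:
        with urllib.request.urlopen(f"{base_url}/healthz", timeout=timeout) as resp:
            return resp.status == 200
    except (OSError, http.client.HTTPException):
        # URLError, HTTPError (non-2xx) and timeouts are all OSError subclasses.
        return False


def is_live(base_url: str = BASE_URL, timeout: float = 120.0) -> bool:
    """Liveness-style check: POST /ask returns HTTP 200 with a non-empty body."""
    request = urllib.request.Request(
        f"{base_url}/ask",
        data=json.dumps({"text": TEST_PROMPT}).encode("utf-8"),
        headers={"Content-Type": "application/json"},
        method="POST",
    )
    try:
        with urllib.request.urlopen(request, timeout=timeout) as resp:
            return resp.status == 200 and len(resp.read()) > 0
    except (OSError, http.client.HTTPException):
        return False


def main() -> int:
    """Exit code for an exec probe: 0 means healthy, 1 means the LLM check failed."""
    return 0 if is_live() else 1
```

As a standalone script for an exec probe, this would end with `sys.exit(main())` (after `import sys`). A failed check then surfaces as a non-zero exit code, and the kubelet restarts the container once the probe's `failureThreshold` is reached.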

Now you can send a request with curl to the EXTERNAL-IP of the `fastapi-llm-app` service shown above:

```
❯ curl http://10.216.24.104:5000/ask -X POST -H "Content-Type: application/json" -d '{"text":"list comprehension examples in python"}'
```
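If you prefer to query the service from Python, the same request can be sent with the standard library's `urllib.request`. This is a minimal sketch with a few assumptions:

- The base URL is the EXTERNAL-IP and port from the `k get svc` output above.
- The 300-second timeout is an assumption, chosen to allow for slow responses from a CPU-only model.
- The helper names are hypothetical.

The response body is returned as raw text because its exact JSON structure depends on the app.

```python
import json
import urllib.request

# EXTERNAL-IP and port of the fastapi-llm-app LoadBalancer service shown above;
# substitute the values from your own `k get svc` output.
SERVICE_URL = "http://10.216.24.104:5000"


def build_ask_request(question: str, base_url: str = SERVICE_URL) -> urllib.request.Request:
    """Build the same POST /ask request that the curl command sends."""
    return urllib.request.Request(
        f"{base_url}/ask",
        data=json.dumps({"text": question}).encode("utf-8"),
        headers={"Content-Type": "application/json"},
        method="POST",
    )


def ask(question: str, base_url: str = SERVICE_URL, timeout: float = 300.0) -> str:
    """Send the question to the service and return the raw response body as text."""
    with urllib.request.urlopen(build_ask_request(question, base_url), timeout=timeout) as resp:
        return resp.read().decode("utf-8")
```

For example, `print(ask("list comprehension examples in python"))` issues the same query as the curl command.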

In our next blog post, we'll enhance our FastAPI application with robust instrumentation. Specifically, we'll integrate FastAPI metrics into the application to gain insight into its performance and usage. We'll also add traces using OpenTelemetry, a framework for distributed tracing and observability in modern applications. With OpenTelemetry, we'll gain visibility into the application's behavior across distributed systems. That visibility helps us identify performance bottlenecks and optimize resource utilization.

Hope it was useful. Cheers!
